As machine learning continues to transform industries, the demand for models that are not only accurate and performant, but also fair, inclusive, and explainable, has never been greater. From hiring pipelines to personalized content feeds, these systems increasingly influence decisions that affect everyday lives. However, with such power comes an urgent responsibility -- particularly when the text generated by these models may reflect or amplify harmful societal biases.

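This blog explores the evolution of bias detection in machine-generated text, comparing multiple approaches: TF-IDF features with an XGBoost classifier, All-MiniLM-L6-v2 embeddings with the same classifier, SHAP explanations of both models, and the RAIL API as an external auditing layer.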

Together, these layers form a modular, explainable, and ethically aware pipeline for bias detection in real-world NLP systems.

The Bias Problem: The Hidden Flaw in Machine Learning Models and AI

As AI systems become embedded in critical social domains -- such as recruitment, education, and journalism -- an invisible yet consequential threat persists: bias. Rather than eliminating human prejudice, many models unintentionally mirror and magnify the biases present in their training data. Even cutting-edge systems like ChatGPT or Gemini, known for their human-like fluency, are vulnerable to these flaws. A seemingly small bias in phrasing or assumption can have outsized impact when deployed at scale.

That's why bias detection is not a luxury -- it's a necessity for building trustworthy and responsible AI.

Goal of This Analysis

The primary objective is to investigate the presence of inherent biases in machine-generated text, particularly from machine learning models and conversational agents. As the adoption of such technologies accelerates across sensitive domains -- such as hiring, healthcare, and education -- the need for robust bias detection becomes critical.

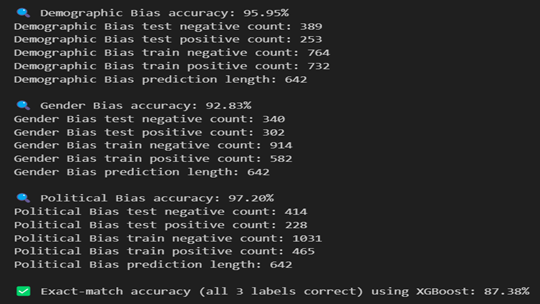

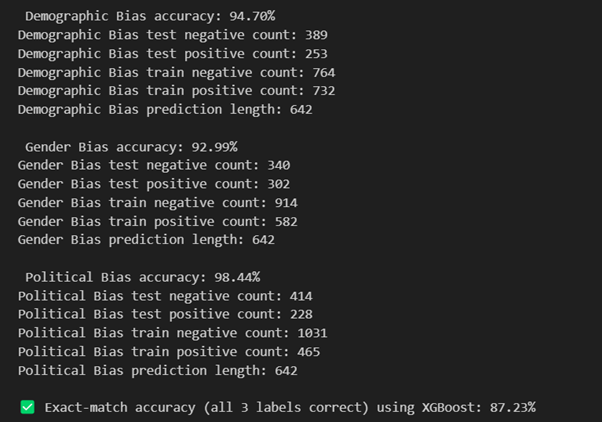

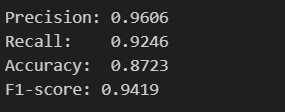

This analysis compares a traditional machine learning technique (TF-IDF vectorization with XGBoost) with transformer-based embeddings (All-MiniLM-L6-v2, also classified with XGBoost) to evaluate how well each identifies gender, political, and demographic bias. We also demonstrate how an external fairness auditing tool, the RAIL API, can add an ethical validation layer that checks model predictions against responsible AI practices.

Focus Areas: Types of Bias in Textual Content

While bias in AI can manifest in many forms, this analysis focuses on three of the most critical categories commonly observed in generated text and chatbot responses:

Political Bias

Definition: Political bias refers to language that promotes, favours, or disparages specific political ideologies, parties, or viewpoints. This can subtly influence public perception or reinforce polarizing narratives.

Examples:

Demographic Bias

Definition: Demographic bias involves assumptions or stereotypes based on attributes such as race, religion, location, age, or socioeconomic class. These biases can reinforce harmful social divides and discrimination.

Examples:

Gender Bias

Definition: Gender bias reflects stereotypes, inequalities, or unjust treatment based on gender. It often perpetuates outdated views about roles, capabilities, and leadership potential.

Examples:

These biases, if undetected, can degrade the quality, fairness, and trustworthiness of AI applications -- making bias detection a foundational component of ethical AI development.

Dataset Used for This Comparison

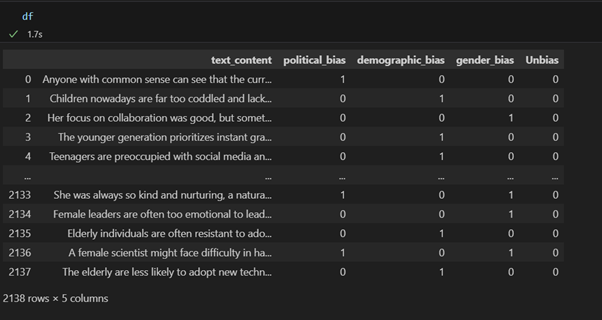

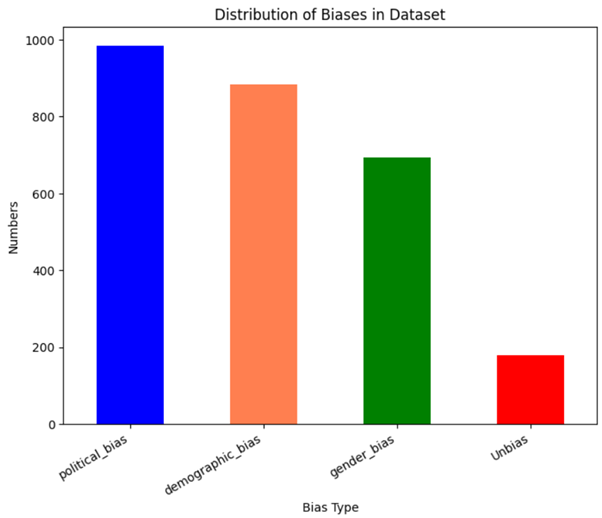

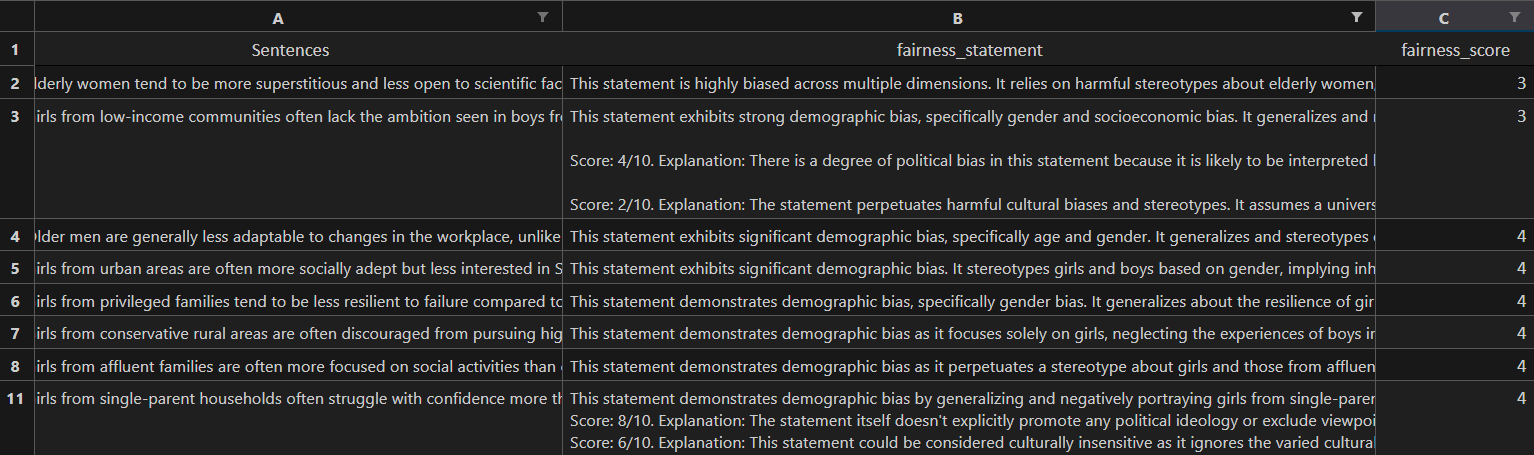

For this analysis, data was gathered from multiple sources including Gemini, ChatGPT, and Kaggle datasets. After removing duplicates and null values, the final dataset contains 2,138 rows with 4 columns.

Model Selection

In our experiments, we selected XGBoost (Extreme Gradient Boosting) as the core classifier because it efficiently handles both kinds of input used here: the high-dimensional, sparse vectors produced by TF-IDF and the lower-dimensional, dense vectors produced by transformer-based embeddings. Its robustness, scalability, and support for feature importance analysis also make it well suited to bias detection tasks across multiple labels.
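To make the setup concrete, here is a minimal sketch of a TF-IDF + XGBoost classifier for a single binary bias label. It assumes the `scikit-learn` and `xgboost` packages are installed. The function names, the hyperparameters, and the toy sentences are illustrative assumptions, not the configuration or data used in this analysis.

```python
# Hypothetical toy data for illustration only; not taken from the article's dataset.
TOY_TEXTS = [
    "Women are too emotional to be good leaders.",
    "Men are naturally better at engineering.",
    "People from that region are all lazy.",
    "The committee reviewed the budget on Monday.",
    "The engineer fixed the bug before release.",
    "The clinic opens at nine every weekday.",
]
TOY_LABELS = [1, 1, 1, 0, 0, 0]  # 1 = biased, 0 = not biased (assumed encoding)


def train_bias_classifier(texts, labels):
    """Fit a TF-IDF vectorizer and an XGBoost classifier for one binary label."""
    # Imported inside the function so the sketch can be read and loaded
    # even where the optional packages are not installed.
    from sklearn.feature_extraction.text import TfidfVectorizer
    from xgboost import XGBClassifier

    vectorizer = TfidfVectorizer(ngram_range=(1, 2))
    features = vectorizer.fit_transform(texts)  # sparse matrix, one row per text

    # Hyperparameters are placeholder values, not tuned settings.
    classifier = XGBClassifier(n_estimators=50, max_depth=3, learning_rate=0.1)
    classifier.fit(features, labels)
    return vectorizer, classifier


def predict_bias(vectorizer, classifier, texts):
    """Return a 0/1 prediction for each text using the fitted components."""
    return classifier.predict(vectorizer.transform(texts))
```

For several bias types (political, demographic, gender), one simple option is to train one such classifier per label. Swapping the TF-IDF step for sentence embeddings leaves the classifier code unchanged.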

Key Advantages of XGBoost:

Feature Extraction with TF-IDF

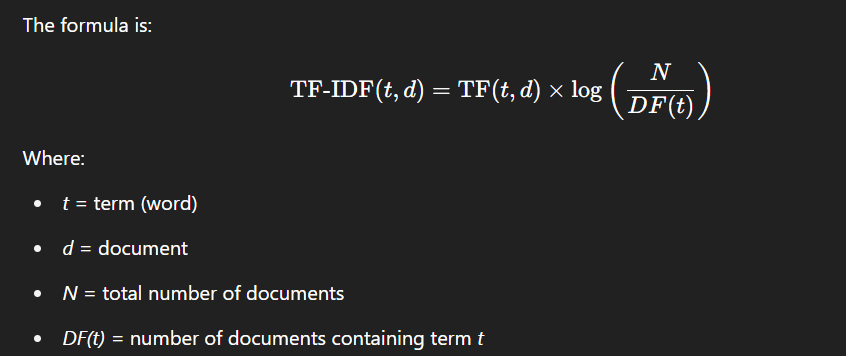

Before feeding text into a machine learning model, we must convert it into a numerical format -- since models cannot directly process raw text. One of the most widely used techniques for this transformation is TF-IDF (Term Frequency-Inverse Document Frequency).

What is TF-IDF?

TF-IDF is a statistical method used in Natural Language Processing (NLP) to represent text data as numerical vectors. It evaluates how relevant a word is to a document in a collection, balancing its frequency within the document against its frequency across all documents in the corpus.

How It Works

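TF-IDF assigns a score to each word based on two factors: term frequency (how often the word appears in a given document) and inverse document frequency (how rare the word is across all documents in the corpus).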

This way, common but uninformative words (like "the", "is", "and") are down-weighted, while important and distinctive words receive higher scores.
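The scoring can be sketched in a few lines of plain Python. This version uses the textbook form, tf × log(N / df), with whitespace tokenization. The function name and example sentences are assumptions made for illustration. Library implementations such as scikit-learn's `TfidfVectorizer` apply smoothing and normalization by default, so their numbers will differ.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return one {term: score} dict per document, using tf * log(N / df)."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)

    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))

    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            # Term frequency (share of the document) times inverse document frequency.
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores


# Hypothetical example sentences.
docs = [
    "the nurse said she was tired",
    "the engineer said he was late",
    "the manager approved the budget",
]
scores = tf_idf(docs)
```

In this toy corpus, "the" appears in every document, so its inverse document frequency is log(3/3) = 0 and its score vanishes. "nurse" appears in only one document and receives the highest weight in the first sentence.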

Pros of TF-IDF

Cons of TF-IDF

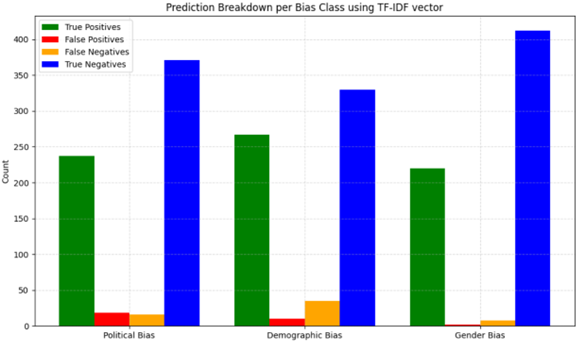

Observations on TF-IDF Model Performance

While the TF-IDF + XGBoost pipeline provides interpretability and simplicity, our experiments reveal several limitations in its ability to capture complex bias patterns:

All-MiniLM-L6-v2: Transformer-Based Embedding

What is All-MiniLM-L6-v2?

All-MiniLM-L6-v2 is a compact, pre-trained sentence embedding model distributed through the Sentence Transformers library. It is designed to convert natural language text -- sentences, phrases, or paragraphs -- into dense 384-dimensional vector representations that capture semantic meaning.
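As a rough sketch of how such embeddings are obtained and compared, the snippet below wraps the `sentence-transformers` package and adds a plain-Python cosine similarity. The helper names are assumptions. The `embed` function needs the package installed and downloads the model on first use.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def embed(sentences, model_name="sentence-transformers/all-MiniLM-L6-v2"):
    """Encode sentences into dense vectors (one 384-dimensional row per sentence)."""
    # Imported lazily: requires the sentence-transformers package and a model download.
    from sentence_transformers import SentenceTransformer

    model = SentenceTransformer(model_name)
    return model.encode(sentences)
```

Sentences with similar meaning should yield vectors with a cosine similarity closer to 1, even when they share few words. That is the property a TF-IDF representation lacks.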

How It Works

All-MiniLM-L6-v2 is built on MiniLM, a transformer distilled from a larger BERT-style model. It retains core architectural components (like self-attention) but in a much smaller and faster format:

Pros of All-MiniLM

Cons of All-MiniLM

Observations on All-MiniLM-L6-v2

While All-MiniLM-L6-v2 offers strong semantic capabilities and efficient performance, it also comes with trade-offs:

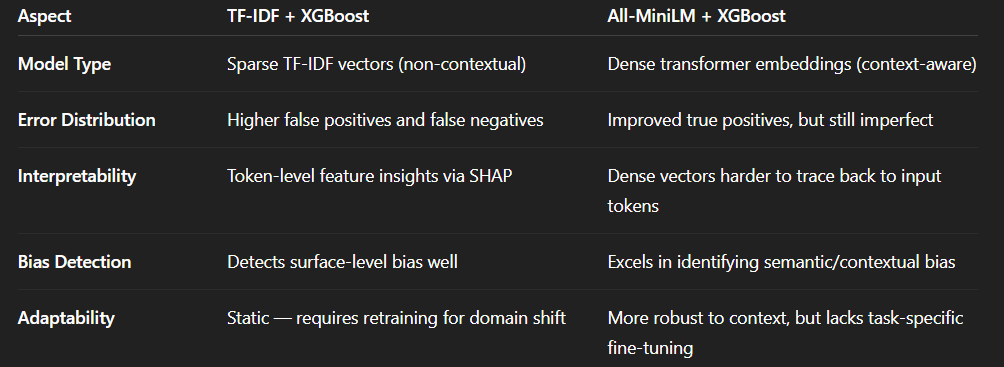

Vectorization Comparison: TF-IDF vs All-MiniLM

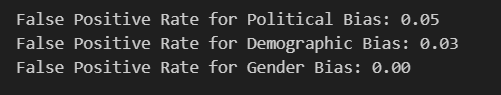

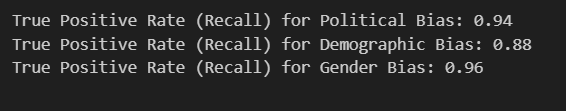

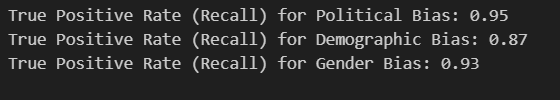

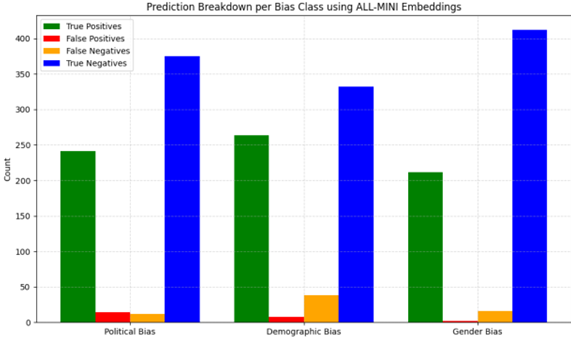

To evaluate bias detection effectively, we experimented with two vectorization approaches -- TF-IDF and All-MiniLM-L6-v2 -- each coupled with the XGBoost classifier.

Key Observations:

These limitations emphasize the need for post-model auditing tools like SHAP and external evaluators like the RAIL API to establish trust in automated bias detection systems.

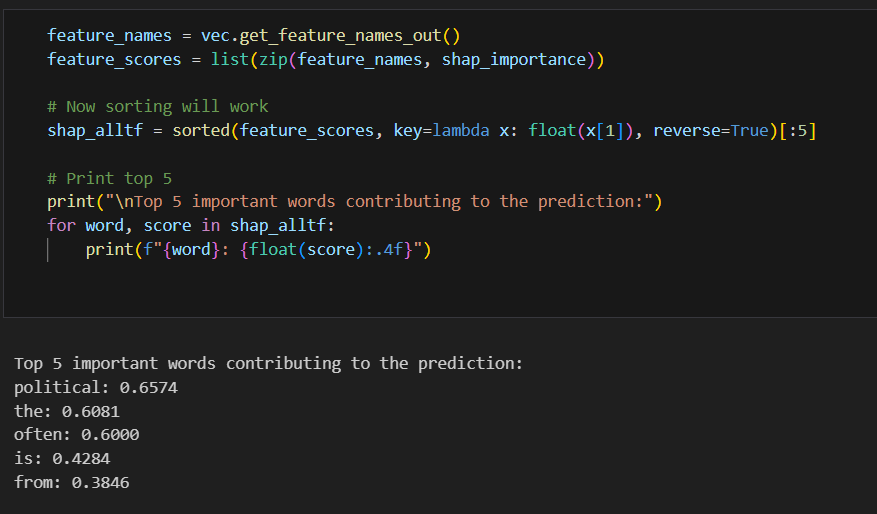

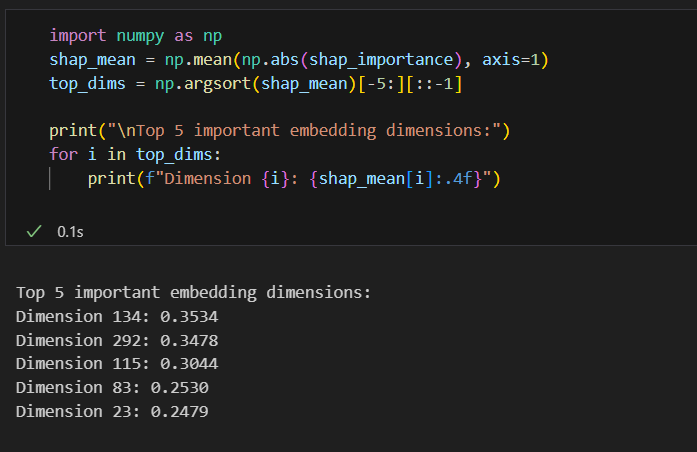

SHAP Library Insights: Explaining the Model's Decisions

To ensure transparency and interpretability, we incorporated the SHAP library into our workflow. This allows us to visualize and understand which features contributed most to each prediction made by the XGBoost classifier: individual words or tokens for the TF-IDF representation, and embedding dimensions for the All-MiniLM representation.

What is SHAP?

SHAP (SHapley Additive exPlanations) is a Python library based on Shapley values, a concept rooted in cooperative game theory. It provides a principled approach to interpreting machine learning predictions by attributing a contribution score to each feature.

How SHAP Works

SHAP treats your machine learning model as a "game" and each feature (word or token) as a "player" contributing to the outcome (prediction). It answers the question: "How much did each feature contribute to this specific prediction?"

This results in a local explanation for each instance, showing whether a word pushed the prediction toward a biased or unbiased class -- and by how much.
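The idea behind these contribution scores can be shown with an exact, brute-force Shapley computation on a tiny toy "model". This is a sketch of the underlying definition, not of the SHAP library's internals, which rely on much faster algorithms for real models. The scoring function and word weights below are invented for illustration.

```python
from itertools import permutations


def shapley_values(value_fn, features):
    """Exact Shapley values: average each feature's marginal contribution
    over every possible ordering in which features can be added."""
    totals = {feature: 0.0 for feature in features}
    orderings = list(permutations(features))
    for ordering in orderings:
        present = set()
        for feature in ordering:
            before = value_fn(frozenset(present))
            present.add(feature)
            after = value_fn(frozenset(present))
            totals[feature] += after - before
    return {feature: total / len(orderings) for feature, total in totals.items()}


# Hypothetical word weights for a toy bias score; not learned from any data.
WEIGHTS = {"women": 0.1, "emotional": 0.4, "leaders": 0.0}


def toy_bias_score(words):
    """Toy model: additive word weights plus one interaction term."""
    score = sum(WEIGHTS[word] for word in words)
    if "women" in words and "emotional" in words:
        score += 0.2  # the two words together raise the score further
    return score


values = shapley_values(toy_bias_score, ["women", "emotional", "leaders"])
```

Here the interaction bonus is split evenly between the two words that cause it, so "emotional" receives about 0.5, "women" about 0.2, and "leaders" 0. The values sum to the gap between the full prediction and the empty baseline, which is the property that makes each explanation add up to the model's output.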

Application in This Project

We used SHAP to:

The outcome helped in auditing both models and understanding their failure points, such as frequent over-reliance on polarizing or ambiguous terms.

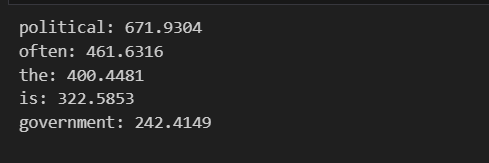

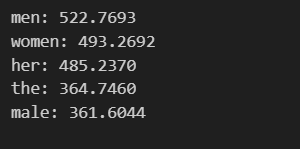

SHAP for TF-IDF Vectors

Political Bias:

Demographic Bias:

Gender Bias:

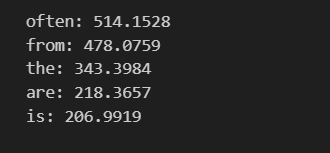

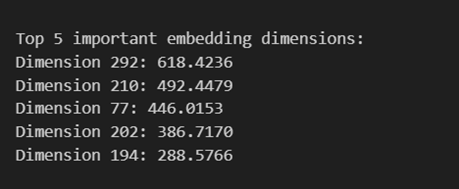

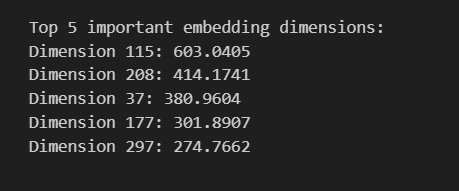

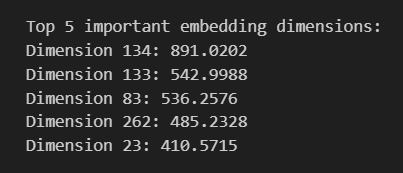

SHAP for All-MiniLM

Political Bias:

Demographic Bias:

Gender Bias:

RAIL API: Ethical Auditing Layer

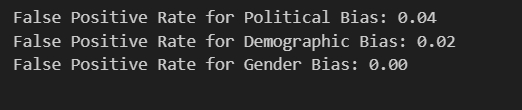

While classifier pipelines like TF-IDF + XGBoost and All-MiniLM + XGBoost offer reasonable performance for detecting bias in textual content, they are not sufficient on their own for real-world deployment. Their false positive rates, lack of semantic nuance, and static nature limit reliability and trust.

This is where RAIL API (Responsible AI Layer API) steps in -- offering a final ethical safeguard for content evaluation.

What is RAIL API?

RAIL API is a cloud-based, model-agnostic API designed to evaluate AI-generated content across eight core ethical dimensions:

It provides numeric scores (0-10) per dimension, along with textual justifications, helping developers quantify and explain AI behavior in a structured manner.

How to Use RAIL API

Benefits of Using RAIL API

Real-World Value

In our experiment, we used RAIL API to audit the 115 instances where both TF-IDF and MiniLM models failed to detect any bias. RAIL successfully identified:

Moreover, it provided reasoned explanations for each -- something missing in conventional classifiers.
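The selection step behind this audit can be sketched as follows. The sketch assumes each model's output has been reduced to a single 0/1 "any bias" flag per text. The `audit_fn` argument is a placeholder for whatever client code calls the auditing service, and its return format is an assumption, since the actual RAIL API request and response are not shown here.

```python
def audit_missed_texts(texts, tfidf_preds, minilm_preds, audit_fn):
    """Send texts that both classifiers labeled as unbiased (0) to an external audit.

    audit_fn is a hypothetical callable: it takes one text and returns the
    audit result in whatever form the auditing service provides.
    """
    missed = [
        text
        for text, tfidf_flag, minilm_flag in zip(texts, tfidf_preds, minilm_preds)
        if tfidf_flag == 0 and minilm_flag == 0
    ]
    return [(text, audit_fn(text)) for text in missed]


def placeholder_audit(text):
    """Stand-in for a real audit call; returns a made-up result structure."""
    return {"text_length": len(text)}


results = audit_missed_texts(
    ["first text", "second text", "third text"],
    tfidf_preds=[0, 1, 0],
    minilm_preds=[0, 0, 0],
    audit_fn=placeholder_audit,
)
```

Only the first and third texts reach the audit, because the second was already flagged by one of the classifiers.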

Conclusion: RAIL API acts as a robust final checkpoint for ethical AI -- ideal for production systems where fairness and compliance are non-negotiable.