As AI systems evolve from processing text alone to integrating vision, audio, and video, a troubling pattern is emerging: bias doesn't just carry over into multimodal systems - it compounds. This article examines how prejudice enters and amplifies within vision-language models, why the research community has been slow to address it, and what organizations can do to build fairer multimodal AI.

The multimodal moment - and its hidden risks

The AI landscape has shifted dramatically. In 2026, the most capable models are no longer text-only: systems like GPT-5, Gemini 3, Claude 4, and open-source alternatives such as InternVL3 and Qwen3-VL process images, documents, charts, and video alongside natural language. Benchmarks like MMMU Pro now evaluate multimodal reasoning across 30+ academic disciplines, and leading models score above 70% on tasks that combine visual perception with logical inference.

But as these models grow more powerful, a critical question remains under-studied: are multimodal AI systems fair?

A landmark 2026 paper in Frontiers in Big Data by Ahmad, Vallès, and Idaghdour put it starkly: vision-language models "encode and amplify demographic biases across modalities." Adding a new input type - say, medical images alongside clinical text - can improve a system's overall accuracy while simultaneously making its fairness outcomes worse for specific demographic groups. This paradox - better aggregate performance masking deeper inequity - sits at the heart of the multimodal fairness challenge.

How bias enters multimodal systems

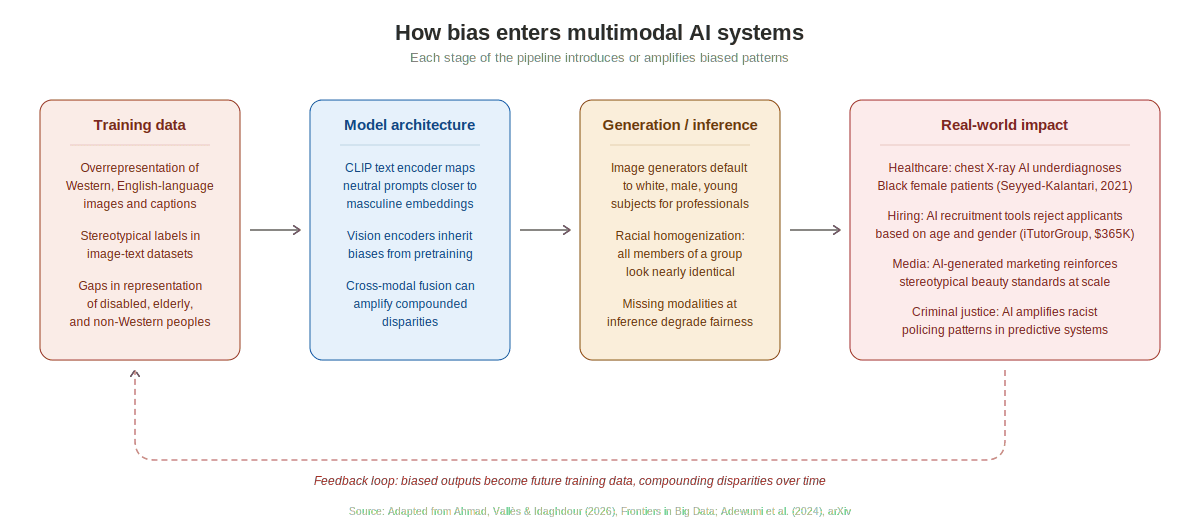

Unlike traditional text-only language models, multimodal systems have more entry points for bias. Prejudiced patterns can infiltrate at every stage of the AI pipeline - from the data on which models are trained, through their architectural design, all the way to how they generate outputs.

Figure 1: The multimodal bias pipeline. Stereotypical training data, biased model architectures, and flawed inference patterns create a feedback loop that compounds disparities over time.

Training data: the foundation of the problem

Most large-scale image-text datasets are dominated by English-language content from Western internet sources. The LAION-5B dataset - widely used for training text-to-image models - contains 2.3 billion English-language pairs but pools over 100 other languages into a secondary collection of roughly equal size. This linguistic imbalance means that when a model encounters a prompt like "a doctor," its internal representation defaults to what Western, English-language internet images depict: typically a white male in a lab coat.

The problem extends beyond language. A 2025 content analysis published in a Taylor & Francis journal found that AI-generated portraits of STEM professionals were "almost exclusively depicting male, white, and older individuals." In the underlying training data, image captions often carry stereotypical associations - linking certain professions, attributes, and settings to specific demographic groups - that the model absorbs wholesale.

Model architecture: where bias amplifies

The most popular multimodal architectures rely on a shared embedding space - typically trained through contrastive learning approaches like CLIP - to align text and visual representations. Research published in January 2025 in the Journal of Imaging demonstrated that CLIP's text encoder maps neutral prompts (like "a person") significantly closer to masculine embeddings than to feminine ones. This means that before a single image is generated, the model's internal geometry already carries a gender bias.
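One way to probe this kind of geometric skew is to compare the cosine similarity of a neutral prompt embedding against two gendered anchor embeddings. The sketch below is only an illustration of that measurement: the three-dimensional vectors are made-up stand-ins for real text-encoder outputs, and the `gender_skew` helper is a hypothetical name, not the method used in the cited study.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def gender_skew(neutral, masculine, feminine):
    """Positive values mean the neutral prompt sits closer to the masculine anchor."""
    return cosine(neutral, masculine) - cosine(neutral, feminine)

# Toy stand-ins for text-encoder outputs (NOT real CLIP embeddings).
emb_person = [0.9, 0.3, 0.1]  # "a person"
emb_man = [1.0, 0.2, 0.0]     # "a man"
emb_woman = [0.2, 1.0, 0.0]   # "a woman"

skew = gender_skew(emb_person, emb_man, emb_woman)
print(f"skew toward masculine anchor: {skew:.3f}")
```

With a real encoder, the same comparison would be run over many neutral prompts and anchor pairs, since a single prompt says little about the embedding space as a whole.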

When vision encoders are coupled with language models, these biases can compound. A 2025 study by Sampath et al. showed that while adding new modalities during training improved overall predictive accuracy, "disparities in fairness metrics - such as true positive rates and demographic parity - can persist or even increase depending on the evaluation setting." In medical AI specifically, state-of-the-art chest X-ray models have been shown to systematically underdiagnose Black female patients despite achieving expert-level performance on aggregate metrics.
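The pattern of rising accuracy alongside a widening fairness gap is easy to reproduce on paper. The sketch below uses entirely synthetic labels and hypothetical predictions for a text-only and a text-plus-image model; the group names "A" and "B" and all numbers are assumptions chosen to make the effect visible, not results from any cited study.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def tpr_by_group(y_true, y_pred, groups):
    """True positive rate per group: detected positives / actual positives."""
    hits, positives = {}, {}
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            positives[g] = positives.get(g, 0) + 1
            hits[g] = hits.get(g, 0) + (1 if p == 1 else 0)
    return {g: hits[g] / positives[g] for g in positives}

def tpr_gap(y_true, y_pred, groups):
    """Difference between the best-served and worst-served group."""
    rates = tpr_by_group(y_true, y_pred, groups).values()
    return max(rates) - min(rates)

# Synthetic labels: 8 cases from group "A", 4 from group "B".
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 1, 1, 0, 0]
groups = ["A"] * 8 + ["B"] * 4

# Hypothetical predictions from a text-only and a text+image model.
pred_text_only = [1, 1, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0]
pred_multimodal = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0, 0, 0]

for name, pred in [("text-only", pred_text_only), ("multimodal", pred_multimodal)]:
    print(name, round(accuracy(y_true, pred), 3), round(tpr_gap(y_true, pred, groups), 3))
```

In this toy data the added modality helps only group "A", so aggregate accuracy goes up while the gap in true positive rates opens. Reporting per-group rates next to the headline metric is what exposes it.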

Generation and inference: bias made visible

At inference time, the biases baked into data and architecture become strikingly visible. Bloomberg's analysis of over 5,000 AI-generated images found that images associated with higher-paying job titles consistently featured people with lighter skin tones, while results for most professional roles were male-dominated. When asked to generate images of "a terrorist," Stable Diffusion consistently rendered men with dark facial hair wearing head coverings - directly reflecting and amplifying stereotypes.

A Nature Scientific Reports study from April 2025 documented "significant racial homogenization" in Stable Diffusion's outputs: the model depicted nearly all Middle Eastern men as bearded, brown-skinned, and wearing traditional attire, flattening the diversity of an entire demographic into a single visual template.

Perhaps most concerning: when a modality goes missing at inference time - for instance, when an image is unavailable and the model falls back on text alone - fairness degrades disproportionately for already-marginalized groups.

The research gap: 16 times less attention

Despite the scale of the problem, the academic community has been slow to study multimodal fairness relative to text-only systems.

Figure 2: A 2024 survey found 16× fewer studies on fairness in multimodal models compared to text-only LLMs. A gap of similar scale (50 results versus 4) appears in curated databases like Web of Science.

A 2024 survey by Adewumi et al. (arXiv:2406.19097) searched Google Scholar for "fairness and bias in Large Multimodal Models" versus "fairness and bias in Large Language Models." The results were dramatic: 538,000 results for text-only LLMs versus just 33,400 for multimodal systems - a roughly 16-fold gap. On the more curated Web of Science, the counts were far smaller but the gap was similar in scale: 50 results versus just 4, roughly a 12-fold difference.

This disparity matters because multimodal systems are increasingly deployed in high-stakes contexts - healthcare diagnostics, hiring assessments, content moderation, and public safety - where biased outputs have direct, material consequences for people's lives.

The New Stack noted in a February 2026 feature that "biases are further compounded in multimodal systems, compared to unimodal approaches," making the research gap not just an academic concern but a societal one.

Real-world impact: from hiring to healthcare

The consequences of multimodal AI bias are not hypothetical. Several high-profile cases illustrate the stakes:

Employment discrimination. A U.S. Equal Employment Opportunity Commission lawsuit revealed that iTutorGroup's AI recruitment software automatically rejected female applicants aged 55+ and male applicants aged 60+. Over 200 qualified individuals were disqualified solely on the basis of age, resulting in a $365,000 settlement. In May 2025, a federal judge allowed a collective action lawsuit to proceed in a similar case, marking a new legal milestone.

Medical misdiagnosis. Multimodal foundation models combining medical images with clinical text have been shown to underdiagnose historically marginalized subgroups. A 2021 study by Seyyed-Kalantari et al. demonstrated that chest X-ray AI models systematically underperformed on Black female patients - a finding that remains relevant as these architectures scale into more clinical applications.

Visual media at scale. With an estimated 34 million AI-generated images produced per day as of late 2023, biased visual outputs are reshaping the media landscape. Brookings Institution research showed that text-to-image models consistently default to depicting "successful people" as white, male, young, and dressed in Western business attire - stereotypes that flow directly into advertising, stock photography, and corporate communications.

Disability erasure. Stanford researchers found that when asked to generate an image of "a disabled person leading a meeting," DALL-E instead produced an image of a person in a wheelchair watching someone else lead - reflecting an ableist assumption that disabled individuals cannot hold authority. A 2025 University of Melbourne study further found that AI hiring tools struggled to accurately evaluate candidates with speech disabilities or non-native accents.

A framework for fairer multimodal AI

Addressing multimodal bias requires intervention at every stage of the AI lifecycle. Drawing on recent research, a three-stage mitigation framework has emerged.

Figure 3: A lifecycle approach to multimodal fairness, spanning data preparation, model training, and post-deployment monitoring.

Pre-processing: fix the data first

The most fundamental step is diversifying and auditing training datasets. This means curating culturally representative image-text pairs across multiple languages, reviewing and correcting stereotypical annotations, and documenting known demographic gaps through model cards and datasheets. As one Carnegie Mellon researcher noted, human annotators "bring their own biases and very stereotypical views" into the labeling process - making annotator diversity as important as data diversity.

In-processing: build fairness into training

During model training, fairness-aware loss functions can constrain outputs toward equalized odds or demographic parity. Adversarial debiasing techniques train secondary networks to strip protected-attribute signals from shared embeddings. Critically, cross-modal alignment components (such as CLIP-style encoders) need specific debiasing attention, since research has shown that gender bias can originate in the text embedding and propagate through the entire generation process.
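As a rough illustration of what a fairness-aware loss can look like, the sketch below adds a demographic parity penalty to ordinary binary cross-entropy. It is a minimal sketch under stated assumptions: the penalty form, the weight `lam`, the function names, and the toy scores are all hypothetical, and a real training setup would compute this inside an automatic-differentiation framework rather than in plain Python.

```python
import math

def binary_cross_entropy(y_true, probs, eps=1e-12):
    """Mean binary cross-entropy, with probabilities clipped away from 0 and 1."""
    total = 0.0
    for t, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)

def demographic_parity_gap(probs, groups):
    """Difference between the highest and lowest mean predicted score per group."""
    sums, counts = {}, {}
    for p, g in zip(probs, groups):
        sums[g] = sums.get(g, 0.0) + p
        counts[g] = counts.get(g, 0) + 1
    means = [sums[g] / counts[g] for g in sums]
    return max(means) - min(means)

def fairness_aware_loss(y_true, probs, groups, lam=1.0):
    """Task loss plus a weighted parity penalty; lam trades accuracy against parity."""
    return binary_cross_entropy(y_true, probs) + lam * demographic_parity_gap(probs, groups)

# Toy scores: the skewed model rates group "A" higher across the board.
y_true = [1, 0, 1, 0]
groups = ["A", "A", "B", "B"]
probs_skewed = [0.9, 0.6, 0.4, 0.1]
probs_balanced = [0.8, 0.2, 0.8, 0.2]

print(fairness_aware_loss(y_true, probs_skewed, groups, lam=2.0))
print(fairness_aware_loss(y_true, probs_balanced, groups, lam=2.0))
```

This penalty targets demographic parity only. An equalized-odds variant would compare group means separately among the positive and negative labels.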

A promising new approach - the "Flare" framework described in a March 2026 arXiv paper - achieves ethical fairness without requiring explicit demographic attributes, using latent subgroup detection to improve equity across hidden demographic clusters.

Post-processing: monitor and audit in production

After deployment, continuous monitoring of fairness KPIs (true positive rates, false positive rates, demographic parity ratios) across all modalities is essential. Third-party red-teaming, using diverse and adversarial test sets, can surface biases that internal testing misses. Output-level interventions - such as inference-time prompt engineering that introduces diversity into generated images - can serve as a stopgap while more fundamental fixes are implemented.
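A production monitor for these KPIs can be quite small. The following sketch computes per-group selection rates, false positive rates, and a demographic parity ratio for one batch of logged decisions, then flags the batch for review. The 0.8 alert threshold, the function names, and the batch data are illustrative assumptions rather than values drawn from the tools or studies mentioned here.

```python
def rate(flags):
    """Share of truthy entries; 0.0 for an empty list."""
    return sum(1 for f in flags if f) / len(flags) if flags else 0.0

def fairness_kpis(y_true, y_pred, groups):
    """Per-group selection rate and false positive rate, plus the parity ratio."""
    selection, fpr = {}, {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, gg in zip(y_true, y_pred, groups) if gg == g]
        selection[g] = rate([p == 1 for _, p in rows])
        fpr[g] = rate([p == 1 for t, p in rows if t == 0])
    highest = max(selection.values())
    # Ratio of the lowest to the highest selection rate; 1.0 means parity.
    parity_ratio = min(selection.values()) / highest if highest > 0 else 1.0
    return {"selection_rate": selection, "false_positive_rate": fpr, "parity_ratio": parity_ratio}

PARITY_ALERT_THRESHOLD = 0.8  # illustrative assumption, tune per deployment

def needs_review(kpis, threshold=PARITY_ALERT_THRESHOLD):
    """True when the parity ratio falls below the alert threshold."""
    return kpis["parity_ratio"] < threshold

# One hypothetical batch of logged decisions.
y_true = [1, 0, 1, 0, 1, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

kpis = fairness_kpis(y_true, y_pred, groups)
print(kpis, needs_review(kpis))
```

Running the same check separately for each input modality, and on a rolling window rather than a single batch, would bring it closer to the continuous monitoring described above.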

A growing ecosystem of tools supports this work: Google's Explainable AI platform, IBM's AI Fairness 360, Microsoft's Fairlearn, and newer benchmarks like HEIM, ViSAGe, and the Social Stereotype Index (SSI) all offer frameworks for measuring and mitigating multimodal bias.

The regulatory landscape

Governments worldwide are beginning to address AI fairness through legislation, though approaches vary widely.

Figure 4: Regulatory approaches to AI fairness across major jurisdictions as of early 2026.

The EU AI Act - the most comprehensive framework - classifies AI systems by risk level and imposes mandatory conformity assessments, red-teaming requirements, and transparency obligations on high-risk systems, with enforcement ramping up through 2027.

South Korea's AI Framework Act, effective January 2026, mandates fairness and non-discrimination across high-impact sectors including healthcare and public services, with administrative fines up to approximately $21,000. Japan's AI Basic Act, passed in May 2025, takes a softer approach: requiring avoidance of biased training data and fairness audits, but enforcing compliance through public naming rather than monetary penalties.

The United States remains a patchwork. The Biden-era AI Executive Order has been rolled back, and no comprehensive federal law exists. However, state and local measures - such as New York City's Local Law 144 requiring bias audits for AI hiring tools - are creating a fragmented compliance landscape that organizations must navigate.

Singapore has opted for a voluntary, innovation-first model, with its Model AI Governance Framework providing guidelines rather than binding requirements.

For organizations deploying multimodal AI across borders, this divergent regulatory environment means that fairness and bias mitigation are not just ethical imperatives - they are legal and business necessities.

What comes next

The multimodal fairness challenge is, in many ways, still in its early days. As models increasingly process video, 3D spatial data, and real-time sensor inputs, the attack surface for bias will only grow. Several priorities stand out for the research and practitioner communities:

Close the research gap. The 16× disparity in fairness studies between text-only and multimodal systems must narrow. Funders, journals, and conference organizers should prioritize multimodal fairness as a research area.

Develop multimodal-specific benchmarks. While MMMU Pro and similar benchmarks evaluate capability, few benchmarks systematically test fairness across modalities. Tools like ViSAGe and the Social Stereotype Index point the way, but broader adoption is needed.

Mandate cross-modal fairness audits. Organizations deploying multimodal AI in high-stakes domains should conduct fairness evaluations that test each modality independently and in combination - since bias can emerge from modal interactions that are invisible when testing one modality at a time.
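A cross-modal audit of this kind can be sketched as one fairness metric evaluated under each input configuration. In the example below, the configuration names, the `audit_modalities` helper, the 0.1 tolerance, and all predictions are hypothetical; the data is constructed so that the gap appears only when both modalities are combined.

```python
def tpr_gap(y_true, y_pred, groups):
    """Largest difference in true positive rate between any two groups."""
    hits, positives = {}, {}
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            positives[g] = positives.get(g, 0) + 1
            hits[g] = hits.get(g, 0) + (1 if p == 1 else 0)
    rates = [hits[g] / positives[g] for g in positives]
    return max(rates) - min(rates)

def audit_modalities(y_true, groups, preds_by_config, tolerance=0.1):
    """Return the TPR gap per input configuration and the configurations over tolerance."""
    gaps = {name: tpr_gap(y_true, pred, groups) for name, pred in preds_by_config.items()}
    flagged = [name for name, gap in gaps.items() if gap > tolerance]
    return gaps, flagged

# Synthetic labels for two groups of three cases each.
y_true = [1, 1, 0, 1, 1, 0]
groups = ["A", "A", "A", "B", "B", "B"]

# Hypothetical predictions from the same model under three input configurations.
preds_by_config = {
    "text_only": [1, 0, 0, 1, 0, 0],
    "image_only": [0, 1, 0, 0, 1, 0],
    "text+image": [1, 1, 0, 1, 0, 0],
}

gaps, flagged = audit_modalities(y_true, groups, preds_by_config)
print(gaps, flagged)
```

Here each modality on its own treats both groups equally, yet the combined input benefits only group "A". An audit that tested one modality at a time would miss that.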

Invest in diverse data infrastructure. Data imbalance is a root cause of multimodal bias. Industry-wide initiatives to create and share culturally diverse, multilingual, and demographically representative training datasets would have an outsized impact.

Take feedback loops seriously. As biased AI outputs circulate on the internet and become future training data, a self-reinforcing cycle of bias is already underway. Breaking this cycle requires both technical interventions (like filtering AI-generated content from training pipelines) and broader media literacy efforts.

Conclusion

Multimodal AI represents a genuine leap forward in machine intelligence - enabling systems to see, read, and reason in ways that were science fiction just a few years ago. But that same power makes the stakes of getting fairness wrong commensurately higher. When a biased text model generates a prejudiced paragraph, it can be edited. When biased multimodal systems shape a stream of roughly 34 million generated images per day, influence medical diagnoses, and filter job applicants across modalities, the harms compound at scale and across dimensions of human experience.

The good news is that the tools, frameworks, and regulatory structures to address multimodal AI fairness are emerging. The challenge now is matching the urgency of deployment with the rigor of fairness work.

This article is part of ResponsibleAI Labs' 2026 series on emerging AI ethics and risk. For more, visit responsibleailabs.com.