AI everywhere! These days, it feels like every headline, every pitch deck, and every vendor proposal is buzzing with some new "AI-powered solution." It supports doctors in diagnosing patients, powers customer service chatbots, and even writes the very articles we read. Artificial intelligence has infiltrated nearly every corner of our lives. Still, the real challenge lies in creating truly responsive AI: systems that not only respond quickly but also respond safely, fairly, and responsibly.

And yet, here's the catch: not all AI is created equal. For every breakthrough, there's a story of an AI assistant giving wrong answers, a chatbot going off-script, or a system making decisions that leave users frustrated. The hype is real, but so are the risks.

Some systems deliver value, while others leave us scratching our heads (or worse, cleaning up their mess).

At Responsible AI Labs (RAIL), we believe trust in AI has to be earned. That's why we created the RAIL Score, a system that assesses AI technologies on safety, fairness, transparency, and other factors. Think of it as AI's safety belt, designed to keep innovation moving responsibly.

In this post, we'll look at why modern AI systems need more than speed or scale: they also need to be responsible. We'll discuss what sets responsive AI apart, how the RAIL Score assesses AI across eight key dimensions, and why this framework works as a safety belt for businesses, developers, and users.

What Do We Mean by "Responsive AI"?

Responsive AI marks a major shift in how we engage with technology.

Earlier AI systems relied on rigid rules or fixed guidelines, which often fell short in unexpected situations. Responsive AI uses machine learning and data insights to adapt quickly to user inputs, preferences, and changing circumstances. The goal is AI that understands human intent and adjusts its behavior accordingly, making interactions not just smarter but also safer and more meaningful.

Now compare this with irresponsible AI. When businesses prioritize speed and profit over ethics, the results can include biased hiring decisions, unsafe healthcare recommendations, or customer service bots that frustrate instead of help. These are not merely missed opportunities; they erode trust and highlight the dangers of neglecting AI safety management.

Responsive AI is already reshaping industries:

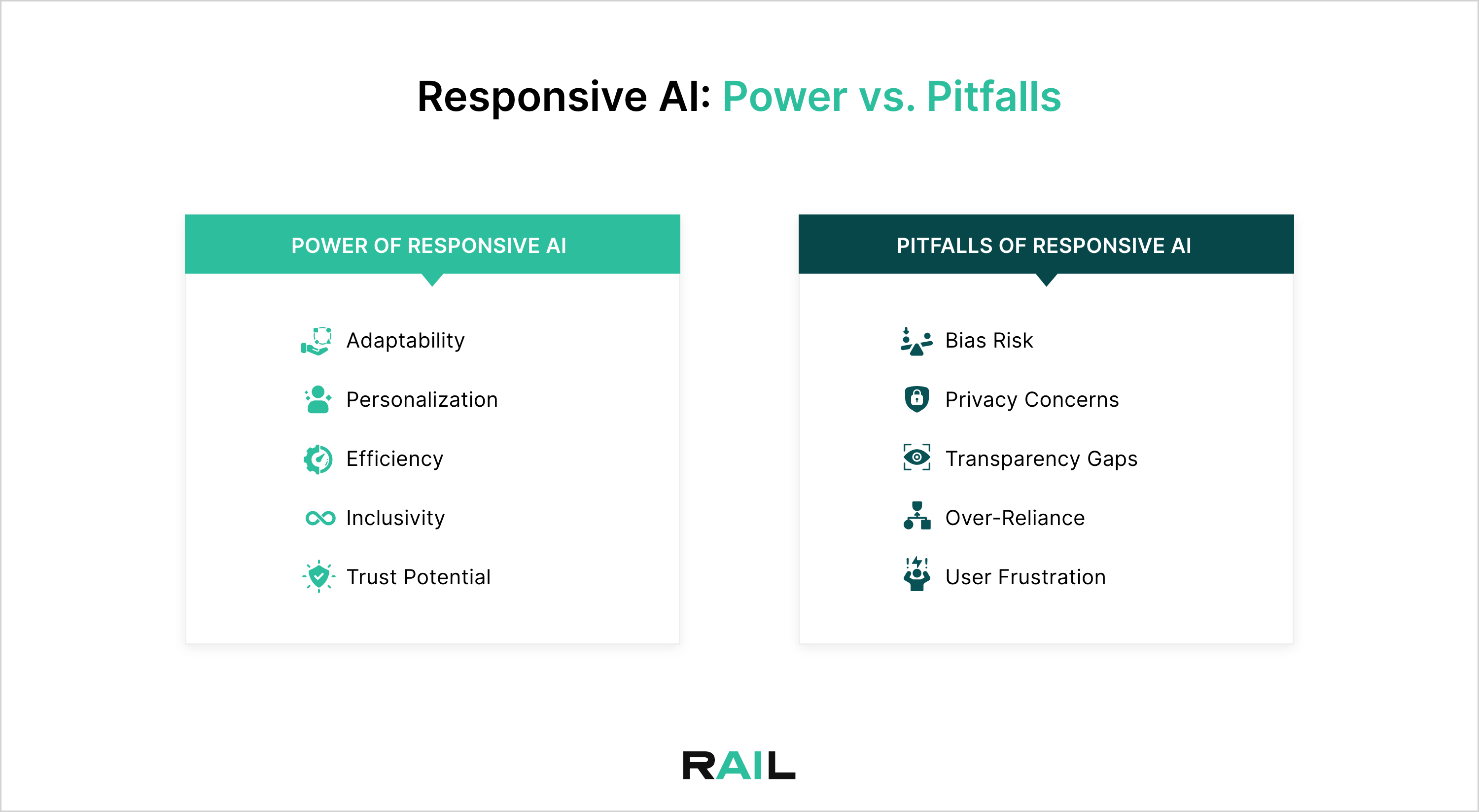

In the end, the very traits that give responsive AI its strength can also create dangers if they are not managed properly. Building genuinely responsive AI requires both technical efficiency and accountability.

It is clear that responsive AI has great potential, but without a standard to assess safety, fairness, and accountability, risks can go unchecked. This is why the RAIL Score is crucial.

At RAIL, we believe transparency, ethics, and accountability are essential: they form the foundation for AI that adapts while staying safe.

What Makes the RAIL Score AI's Safety Belt?

The RAIL Score, created by Responsible AI Labs, serves as a report card for AI systems. It assesses AI-generated content based on eight key dimensions that represent both technical performance and responsibility. As real issues in AI safety emerge every day, companies, developers, and regulators require a standard like the RAIL Score now more than ever. It functions as an AI safety belt, promoting transparency, reliability, and trust in the interactions between systems and people.

By embedding transparency and explainability into its framework, the RAIL Score helps identify risks early on, guiding businesses toward responsible deployment rather than reactive damage control.

Breaking Down the 8 Dimensions of the RAIL Score

The RAIL Score rates each dimension on a scale from 1 to 10 and weights each dimension according to its importance for a specific use case. It's not a one-size-fits-all rating: the framework is flexible and uses tools such as bias detectors and toxicity filters to examine outputs in detail.
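To make the idea of weighted 1-to-10 scores concrete, here is a minimal sketch of how per-dimension scores might be combined into a single number. It assumes a normalized weighted average; the aggregation method is our assumption, and the dimension names, scores, and weights are hypothetical placeholders, not the official RAIL dimensions or RAIL's actual formula.

```python
from typing import Mapping


def weighted_score(scores: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Combine per-dimension scores (1 to 10) into one overall score.

    Assumption: a normalized weighted average. This is an illustrative
    aggregation, not RAIL's published method.
    """
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same dimensions")
    for name, value in scores.items():
        if not 1 <= value <= 10:
            raise ValueError(f"score for {name!r} must be between 1 and 10")
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Dividing by the total weight keeps the result on the same 1-to-10 scale.
    return sum(scores[d] * weights[d] for d in scores) / total_weight


# Hypothetical dimension names, scores, and weights, for illustration only.
example_scores = {"safety": 9.0, "fairness": 7.0, "transparency": 6.0, "privacy": 8.0}
example_weights = {"safety": 2.0, "fairness": 1.0, "transparency": 1.0, "privacy": 1.0}

print(weighted_score(example_scores, example_weights))  # prints 7.8
```

Normalizing by the total weight means a dimension with weight 2.0 simply counts twice as much as one with weight 1.0, and the combined score stays on the same 1-to-10 scale as the individual dimensions.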

Why the RAIL Score Matters Now

AI is no longer a futuristic concept; it's already deciding who gets medical care first, approving loans, or chatting with customers online. But here's the challenge: trust is eroding. Studies show that a majority of people worry about AI spreading misinformation, and real-world risks are no longer hypothetical.

The RAIL Score is built to tackle concrete problems in AI safety and to help companies balance innovation with ethics. Because it weights dimensions according to context, for example emphasizing privacy in healthcare, fairness in finance, and user impact in customer service, the RAIL Score adapts to each use case instead of offering a one-size-fits-all grade.
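Use-case weighting can be sketched as a set of weight profiles applied to the same dimension scores. The profile names, dimension names, and numbers below are hypothetical, and the normalized weighted average is an assumed aggregation; the point is only to show how emphasizing a different dimension per domain changes the overall result for one system.

```python
from typing import Dict

# Hypothetical per-dimension scores (1 to 10) for a single AI system.
SCORES: Dict[str, float] = {"privacy": 4.0, "fairness": 9.0, "user_impact": 7.0}

# Hypothetical weight profiles: each use case emphasizes a different dimension.
PROFILES: Dict[str, Dict[str, float]] = {
    "healthcare": {"privacy": 3.0, "fairness": 1.0, "user_impact": 1.0},
    "finance": {"privacy": 1.0, "fairness": 3.0, "user_impact": 1.0},
    "customer_service": {"privacy": 1.0, "fairness": 1.0, "user_impact": 3.0},
}


def score_for_use_case(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Normalized weighted average (an assumed aggregation, not RAIL's formula)."""
    total = sum(weights.values())
    return sum(scores[d] * w for d, w in weights.items()) / total


for use_case, weights in PROFILES.items():
    print(f"{use_case}: {score_for_use_case(SCORES, weights):.1f}")
# healthcare: 5.6
# finance: 7.6
# customer_service: 6.8
```

In this illustration the same system scores 5.6 under the healthcare profile but 7.6 under the finance profile, because its weak privacy score counts three times as heavily in healthcare.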

For businesses, it acts as a trust badge: evidence that their AI is not only high-performing but also transparent, inclusive, and safe. For regulators, it provides a compliance-ready tool that translates principles like transparency and explainability into measurable standards.

The RAIL Score matters now because it bridges the growing trust gap, making AI not just powerful, but also responsible, reliable, and regulation-ready.

What Are the Benefits of the RAIL Score?

The impact of the RAIL Score goes beyond theory; it delivers value across the entire AI ecosystem:

The RAIL Score enables developers, businesses, regulators, and users to create a safer and more responsive AI environment for all.

AI may be everywhere, but with tools like the RAIL Score, we can make sure it's everywhere responsibly.