Introduction

Enterprise AI reaches its real test in sustained, responsible scale. As AI expands across teams, systems, and decisions, organizations must balance performance with governance, innovation with risk, and speed with trust. The difference between success and failure lies in how well this balance is managed.

Pilot projects help organizations test AI ideas in controlled environments, but success at the pilot stage does not guarantee readiness for AI scaling in real-world enterprise operations.

Moving from experimentation to production introduces new complexities such as data volume, system integration, governance, and cost, which define the true challenge of scaling AI in the enterprise.

When done right, AI scaling enables organizations to move beyond isolated use cases and drive measurable value across functions.

However, without responsibility built in, risks grow with every new deployment, and the challenges of scaling AI across operations become impossible to ignore.

What AI Scaling Means in the Enterprise Context

Gartner predicts that by 2030, most enterprise software will feature multimodal AI capable of processing text, images, speech, and structured data simultaneously.

While this growth brings powerful new capabilities, moving from experimentation to AI scaling in an enterprise context requires far more than successful pilots. It demands stronger organization, clear governance, and operational discipline that can support AI systems over time.

At the same time, AI scaling means expanding AI across real business environments. Models must support more users, handle growing volumes of data, inform critical decisions, and deliver consistent, reliable impact across the organization.

This shift requires organizations to move deliberately from testing ideas to identifying and scaling AI use cases that align with strategic goals and measurable outcomes.

AI scaling involves two types of growth: business and technical.

Both types of scaling progress simultaneously. Business growth creates a need for technical advancements, while technical constraints influence business results.

What Are the Different Types of AI Scaling Laws?

Just as nature follows well-known empirical laws, the AI domain has long been guided by a similar principle. Traditionally, AI model performance was expected to improve as organizations invested in more computing power, larger datasets, and increasingly complex model architectures.

But today, it is guided by three specific laws:

Pretraining Scaling

Pretraining scaling is the foundational principle of AI development. It showed that by enlarging the training dataset, increasing the number of model parameters, and adding computational resources, developers could expect consistent improvements in model intelligence and accuracy.

These three components — data, model size, and compute — are interconnected. According to this law, when larger models are provided with more data, their overall performance improves.

To achieve this, developers need to enhance their computing power, which necessitates robust accelerated computing resources to handle larger training tasks.
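The relationship between data, model size, and compute is often summarized as a power law. The sketch below assumes a commonly used parametric form, loss = E + A / N^alpha + B / D^beta, where N is the parameter count and D is the number of training tokens. The constants are illustrative placeholders chosen for this example, not fitted values, and the compute estimate uses the widely quoted rule of thumb of roughly 6 FLOPs per parameter per token.

```python
def estimated_loss(n_params: float, n_tokens: float,
                   e: float = 1.7, a: float = 400.0, b: float = 400.0,
                   alpha: float = 0.34, beta: float = 0.28) -> float:
    """Illustrative power-law loss estimate. All constants are assumptions."""
    return e + a / n_params ** alpha + b / n_tokens ** beta


def training_flops(n_params: float, n_tokens: float) -> float:
    """Rule-of-thumb training cost: about 6 FLOPs per parameter per token."""
    return 6.0 * n_params * n_tokens


# Scale model size and data together and watch loss fall while compute climbs.
for n, d in [(1e9, 2e10), (1e10, 2e11), (1e11, 2e12)]:
    print(f"{n:.0e} params, {d:.0e} tokens: "
          f"loss ~ {estimated_loss(n, d):.3f}, "
          f"compute ~ {training_flops(n, d):.1e} FLOPs")
```

Under these assumed constants, each tenfold increase in both parameters and tokens lowers the estimated loss but multiplies training compute by a hundred, which is why pretraining gains depend so heavily on accelerated computing.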

Post-Training Scaling

Pretraining a large foundation model requires significant investment, skilled professionals, and large datasets. However, once an organization pretrains and releases a model, it makes AI easier for others to adopt, because they can use the pretrained model as a base for their own applications.

This post-training process creates additional demand for accelerated computing within enterprises and the wider developer community. Well-known open-source models can lead to hundreds or thousands of derivative models, trained across various fields.

The post-training AI scaling law suggests that a pretrained model's performance can continue to improve even after initial training is complete.

These improvements may appear in areas such as computational efficiency, accuracy, or domain-specific performance.

Organizations achieve this through techniques like fine-tuning, pruning, quantization, model distillation, reinforcement learning, and synthetic data augmentation.
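Of these techniques, quantization is one of the simplest to illustrate. The following is a minimal sketch of symmetric 8-bit quantization over a plain list of weights, assuming a single shared scale for the whole list. The function names are hypothetical, and production toolkits use more elaborate schemes (per-channel scales, calibration data, and so on).

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in quantized]


weights = [0.42, -1.27, 0.0, 0.9]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored as a small integer rather than a 32-bit float, trading a bounded rounding error (at most half the scale) for a smaller memory footprint.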

Test-Time Scaling

Test-time scaling, often referred to as long thinking, occurs during inference.

This process resembles how people think: asked to calculate two plus two, most give an immediate answer without needing to explain the basics of addition or numbers.

However, if asked unexpectedly to create a business plan aimed at increasing a company's profits by 20%, a person will likely deliberate over different possibilities and offer a detailed, step-by-step response.

Unlike traditional AI models that quickly produce a single answer to a user's prompt, models that use this method invest in additional computational resources during inference. This enables them to consider various possible responses before selecting the most suitable answer.

The test-time compute strategy includes various methods, such as chain-of-thought prompting, sampling with majority voting, and search.

Post-training techniques like best-of-n sampling can also be applied for long thinking during inference to enhance responses in accordance with human preferences or other goals.
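Best-of-n sampling can be sketched in a few lines. The example below assumes two hypothetical callables: `generate`, which draws one candidate response, and `score`, which rates a candidate (for instance, with a reward model). Both are toy stand-ins here and do not correspond to any particular library's API.

```python
import random
from typing import Callable, List


def best_of_n(prompt: str,
              generate: Callable[[str], str],
              score: Callable[[str, str], float],
              n: int = 8) -> str:
    """Draw n candidate responses and return the highest-scoring one."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates: List[str] = [generate(prompt) for _ in range(n)]
    return max(candidates, key=lambda c: score(prompt, c))


# Toy stand-ins so the sketch runs end to end (assumptions, not real models).
rng = random.Random(0)


def toy_generate(prompt: str) -> str:
    return f"{prompt}: draft {rng.randint(1, 100)}"


def toy_score(prompt: str, candidate: str) -> float:
    # Pretend that a higher draft number means a better answer.
    return float(candidate.rsplit(" ", 1)[-1])


answer = best_of_n("Raise profit by 20%", toy_generate, toy_score, n=8)
print(answer)
```

Inference cost grows roughly linearly with `n`, since every candidate requires a full generation pass, so the value of `n` is a direct trade-off between answer quality and compute spend.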

From Pilot to Production: Scaling AI Beyond Experiments

Many AI initiatives succeed as pilots but struggle to transition into enterprise-wide deployment. Pilot environments are designed for speed and validation, while production systems must withstand real-world complexity.

The table below outlines what usually occurs when organizations try to transition from a controlled pilot to full production:

| Key Issue | Pilot Stage | Production Stage |
|---|---|---|
| Unclear ROI | Newness generates interest, but the metrics are unclear. | Expenses rise, and advantages do not align with strategic goals. |
| Data readiness | Clean, focused datasets are utilized. | Real-world data is complicated, comes from multiple sources, and is tougher to integrate. |
| Governance gap | Pilot risk is low, and compliance checks are casual. | Bias, privacy, clarity, and regulatory supervision become essential. |
| Organizational adoption | Small groups embrace new tools. | Scaling up needs training, cultural shifts, and uniform processes. |

The Four-Pillar Framework for Scaling Enterprise AI

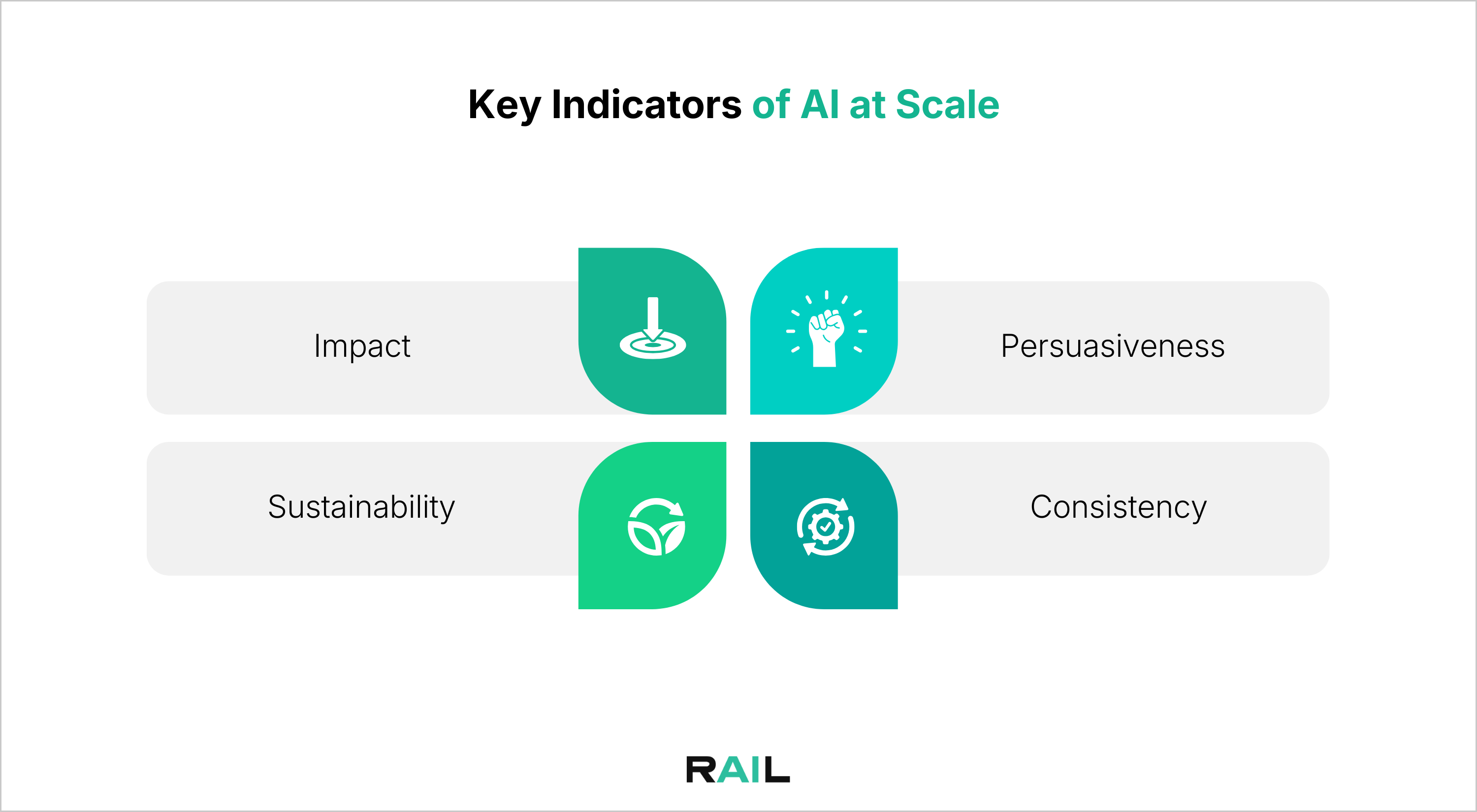

To expand AI use beyond initial pilot programs, companies must focus on four areas simultaneously. Each pillar is interconnected, and neglecting even one can undermine the effectiveness of the entire framework.

Strategic Alignment and Value

This pillar ensures that AI initiatives are driven by business priorities, not experimentation for its own sake.

Scalable AI starts with clearly defined problems and measurable outcomes, such as time saved, reduced errors, or improved customer experiences.

Establishing baseline metrics early allows organizations to track impact accurately and build a strong business case that demonstrates how AI can lower costs or free up resources.

This clarity gives decision-makers the confidence needed to support expansion.
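Tracking impact against a baseline comes down to simple arithmetic. The sketch below assumes a hypothetical helper, `relative_improvement`, and uses made-up figures for handling time and resolution rate purely for illustration.

```python
def relative_improvement(baseline: float, current: float,
                         lower_is_better: bool = True) -> float:
    """Fractional improvement of `current` over `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    if lower_is_better:
        return (baseline - current) / baseline
    return (current - baseline) / baseline


# Hypothetical example: average handling time drops from 12 to 9 minutes.
time_saved = relative_improvement(baseline=12.0, current=9.0)

# Hypothetical example: first-contact resolution rises from 60% to 75%.
resolution_gain = relative_improvement(0.60, 0.75, lower_is_better=False)

print(f"Handling time improved by {time_saved:.0%}")
print(f"Resolution rate improved by {resolution_gain:.0%}")
```

Capturing the baseline before the rollout is what makes these figures defensible: without it, there is nothing to compare the post-deployment numbers against.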

Technical Foundation

A strong technical foundation enables AI systems to operate reliably at scale.

This includes infrastructure that integrates securely with existing enterprise systems, automated data pipelines capable of handling real-time or continuously evolving data, and mature MLOps practices.

Version control, validation, monitoring, and rollback mechanisms prepare teams to meet the operational demands of production-grade AI systems.
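How versioning, validation, and rollback fit together can be shown with a small in-memory sketch. The `ModelRegistry` class, its method names, and the 0.90 accuracy threshold are all hypothetical. A real deployment would rely on a dedicated MLOps platform rather than a Python list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class ModelRegistry:
    """Tracks promoted model versions and gates promotion on validation."""
    validate: Callable[[Dict[str, float]], bool]
    history: List[str] = field(default_factory=list)  # oldest first

    @property
    def live(self) -> Optional[str]:
        """The version currently serving traffic, if any."""
        return self.history[-1] if self.history else None

    def promote(self, version: str, metrics: Dict[str, float]) -> bool:
        """Promote a version only if its metrics pass validation."""
        if not self.validate(metrics):
            return False
        self.history.append(version)
        return True

    def rollback(self) -> Optional[str]:
        """Revert to the previously promoted version, if one exists."""
        if len(self.history) > 1:
            self.history.pop()
        return self.live


# Assumed validation rule: accuracy must be at least 0.90.
registry = ModelRegistry(validate=lambda m: m.get("accuracy", 0.0) >= 0.90)
registry.promote("v1", {"accuracy": 0.93})  # accepted
registry.promote("v2", {"accuracy": 0.85})  # rejected by validation
registry.promote("v3", {"accuracy": 0.95})  # accepted, now live
registry.rollback()                         # monitoring flags v3; revert to v1
print(registry.live)
```

Keeping the promotion history is what makes rollback cheap: reverting means pointing traffic back at a version that already passed validation, not retraining.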

Governance and Compliance

This pillar ensures that AI systems remain trustworthy and compliant as they scale.

AI governance must be embedded into workflows, covering areas such as privacy, bias, explainability, and regulatory oversight. Senior leadership visibility into AI use cases, associated risks, and approval processes is critical.

Robust logging and documentation systems help generate evidence for audits and compliance reporting. This matters particularly in regulated industries such as financial services, where requirements around Consumer Duty, suitability, and operational resilience apply.
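One possible shape for such audit evidence is a structured record written for every AI-assisted decision. The sketch below is an assumption about what such a record might contain. The field names and the `audit_record` helper are hypothetical and are not drawn from any regulatory standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict


def audit_record(model_version: str, user_id: str,
                 inputs: Dict[str, Any], decision: str) -> str:
    """Build one JSON audit line for an AI-assisted decision."""
    # Hash the inputs instead of storing them, to limit exposure of
    # personal data while still allowing later verification.
    payload = json.dumps(inputs, sort_keys=True)
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "user_id": user_id,
        "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "decision": decision,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record("credit-model-v3", "analyst-17",
                    {"income": 52000, "term_months": 36}, "refer_to_human")
print(line)
```

Recording the model version alongside each decision lets an auditor tie any outcome back to the exact model that produced it, which is difficult to reconstruct after the fact.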

Organizational Readiness

Scaling AI successfully depends on people as much as technology.

Organizational readiness focuses on clear communication about what is changing, why it matters, and how roles will evolve.

Role-specific training, visibility into early wins, and encouragement to apply AI insights in daily work help build confidence and adoption. When teams feel supported and prepared, AI becomes embedded in decision-making rather than treated as an external tool.

Challenges in Scaling AI and How to Address Them

Several obstacles hinder the effective scaling of AI applications.

Conclusion

Scaling AI in the enterprise is an organizational transformation that touches strategy, infrastructure, governance, and people. While pilot projects can validate ideas quickly, true AI scaling demands disciplined execution across real-world data, systems, and decision-making environments. As AI scaling laws continue to push performance through larger models, post-training optimization, and test-time compute, enterprises must balance innovation with cost, risk, and accountability.

Without responsible foundations, the challenges of scaling AI solutions across operations — ranging from governance gaps to model drift — can quickly outweigh the benefits. Organizations that succeed are those that deliberately align AI initiatives with business value, build scalable technical and governance frameworks, and prepare their teams for sustained adoption. Ultimately, responsible AI is the enabler that allows enterprises to scale AI with confidence, resilience, and trust.